I Built a Telegram Bot That Writes My Ultrasound Reports From Voice Notes

For a while, I was running an ultrasound clinic without a typist. There was no one in the room to dictate to. I had to scan the patient, talk to them, and then write the report myself, in a clean structured form, before the next patient walked in. Even with templates, writing a single ultrasound report takes time, but the hard part was the context switching: every patient cost me a few minutes of typing that I would rather have spent looking at the screen or talking to the next person.

I did not want to buy a commercial dictation system. I did not want to integrate with anything. I just wanted a fast, minimal solution that I could put together in a weekend and start using on Monday morning.

This is what I built, and how I think about it.

The 30-Second Version

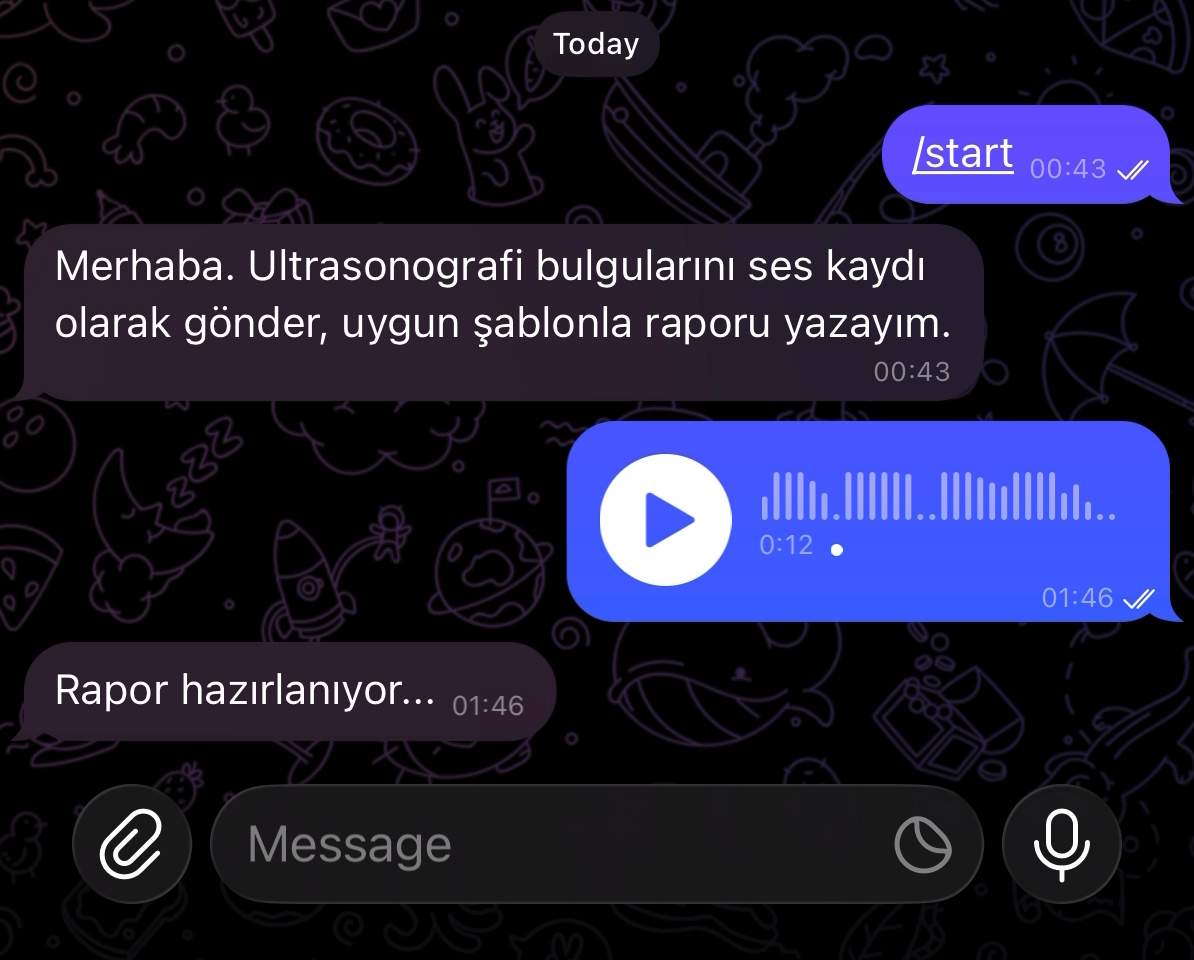

I send a voice note to a private Telegram bot. The bot transcribes my voice, picks the right report template from a list of about 50 ultrasound studies I prepared, fills in the findings I described, and sends back a clean, formatted report in Turkish. I copy it, paste it into our reporting system, do a final check, and sign it.

End to end, from speaking to seeing the formatted report, takes maybe 10 to 15 seconds. I can dictate while still walking to my desk between patients.

Why Telegram

This was the first decision and probably the most important one. I did not want to build a UI. I did not want to write a mobile app, and I did not want to set up speech recording in a browser.

Telegram already does both things I needed. It has voice notes built into every client, on every platform. And on my office desktop I keep Telegram Web open in a tab, so by the time I sit back down at the keyboard, the bot's reply is already waiting on my big screen.

That is the entire user interface. A voice note in, a formatted report out. No login screens, no recording buttons, no upload progress bars to design.

How the Pipeline Works

There are three pieces inside the bot, and each one does exactly one thing.

Step 1: Speech to text. The voice note is transcribed by Whisper, OpenAI’s open speech recognition model, running locally on my Mac mini. I use the Apple Silicon optimized version (mlx-whisper), which is fast enough that a one-minute dictation comes back in a few seconds. No audio leaves the machine. I also feed the model a list of common Turkish radiology terms as a hint, which dramatically improves accuracy on words like “lenfadenopati” or “doppler” that generic models tend to fumble.
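As a rough sketch, the transcription step could look like the code below. It assumes the `mlx-whisper` Python package and its `transcribe` function. The term list, the model repository name, the character cap, and both helper names are illustrative assumptions, not the bot's actual code. Whisper only looks at a limited amount of prompt text, so the sketch caps the hint length with a crude character budget.

```python
# Hypothetical vocabulary; a real list would hold the clinic's own terms.
RADIOLOGY_TERMS = ["lenfadenopati", "doppler", "hipoekoik", "nodül", "tiroid"]


def build_initial_prompt(terms, max_chars=800):
    """Join the vocabulary into one hint string, stopping before max_chars."""
    kept, length = [], 0
    for term in terms:
        extra = len(term) + (2 if kept else 0)  # 2 accounts for the ", " separator
        if length + extra > max_chars:
            break
        kept.append(term)
        length += extra
    return ", ".join(kept)


def transcribe_voice_note(audio_path, terms=RADIOLOGY_TERMS):
    """Transcribe a voice note locally with mlx-whisper (Apple Silicon only)."""
    import mlx_whisper  # imported lazily so the helper above runs anywhere

    result = mlx_whisper.transcribe(
        audio_path,
        path_or_hf_repo="mlx-community/whisper-large-v3-mlx",  # assumed model choice
        language="tr",  # skip language detection, the dictation is Turkish
        initial_prompt=build_initial_prompt(terms),
    )
    return result["text"].strip()
```

Calling `transcribe_voice_note("voice.ogg")` on the downloaded voice note would return the transcript as a plain string, ready for the next step.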

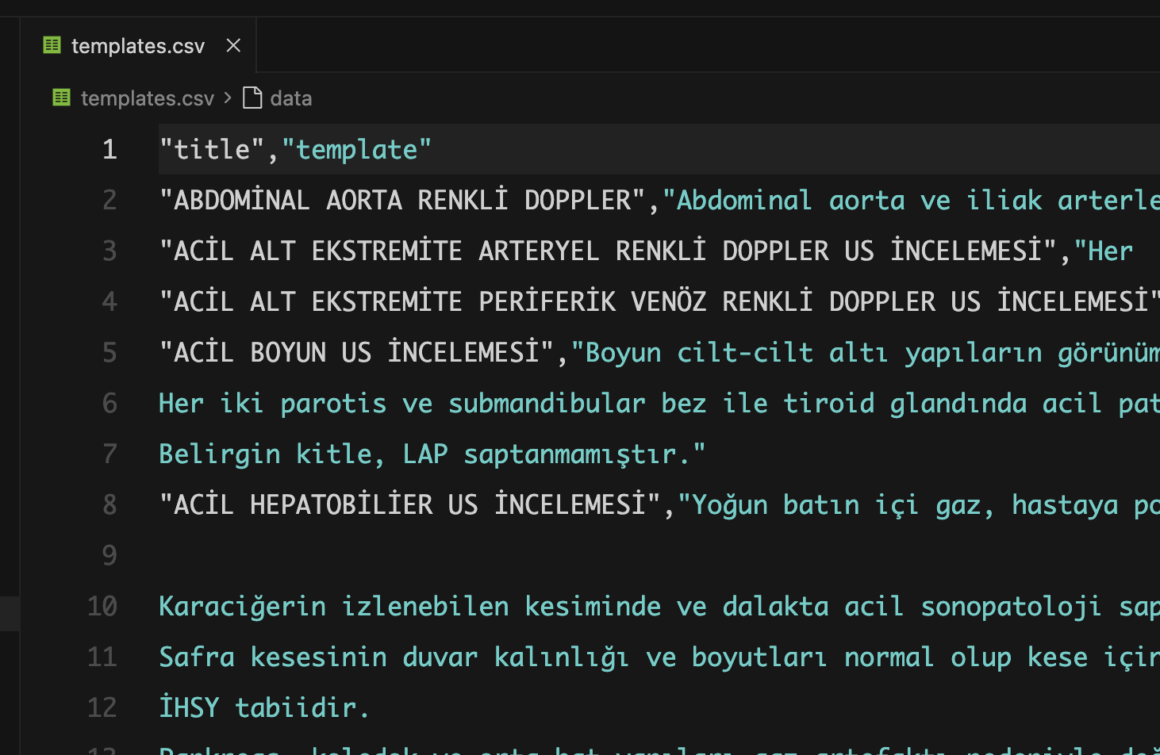

Step 2: Template selection and filling. The transcript goes to a large language model (Claude). The model has access to my full library of ultrasound report templates, which I wrote once and stored in a simple spreadsheet. Each template covers a single study type: thyroid US, abdominal US, lower extremity venous Doppler, and so on. About 50 of them.

The model’s job is narrow on purpose. It reads what I dictated, picks the right template based on the study I named at the start, and modifies it according to my findings. If I said nothing about pathology, the standard normal template comes back unchanged. If I said “right thyroid lobe contains a 6 mm hypoechoic nodule,” that line gets added in the right place and the rest of the template stays exactly as I wrote it.
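One way to express that narrow job is in the prompt itself. The sketch below is an assumption about how such a prompt could be assembled, not the bot's real prompt. The instruction wording, the `<template>` delimiters, and the function names are all hypothetical.

```python
# Hypothetical instruction text; the real wording would be tuned over time.
INSTRUCTIONS = (
    "You are a typist for ultrasound reports. Pick the template that matches "
    "the study named at the start of the dictation. Change only the lines the "
    "dictation contradicts or adds to, and leave every other line exactly as "
    "written. If no findings are dictated, return the template unchanged."
)


def render_library(templates):
    """Render {study name: template text} as clearly delimited blocks."""
    blocks = []
    for name in sorted(templates):  # stable order keeps the prompt identical between calls
        blocks.append(f'<template name="{name}">\n{templates[name].strip()}\n</template>')
    return "\n\n".join(blocks)


def build_prompt(templates, transcript):
    """Return (system_text, user_text) for a single chat-style model call."""
    system_text = INSTRUCTIONS + "\n\n" + render_library(templates)
    user_text = "Dictation:\n" + transcript.strip()
    return system_text, user_text
```

Keeping the templates in the system text and the dictation in the user message means the large, unchanging part of the prompt is identical on every call, which is what makes it a good candidate for caching.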

This template-first design matters. I am not asking AI to invent a report. I am asking it to apply my own pre-approved language and only deviate where I told it to. The wording is mine. The structure is mine. The model is just the typist.

Step 3: Telegram reply. The bot sends the report back as a message, with the title and footer in bold. I long-press, copy, paste into the reporting system, glance over it, sign.
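Telegram can render bold text when a message is sent with its HTML parse mode. The helper below is a minimal sketch of that formatting. The function name and the three-part split into title, body, and footer are assumptions. The text is escaped first so that a stray `<` or `&` in a finding cannot break the markup.

```python
import html


def format_report(title, body, footer):
    """Bold the title and footer, escaping <, > and & everywhere."""
    return (
        f"<b>{html.escape(title, quote=False)}</b>\n\n"
        f"{html.escape(body, quote=False)}\n\n"
        f"<b>{html.escape(footer, quote=False)}</b>"
    )
```

With a library such as python-telegram-bot (an assumption, since the post only says "Telegram bot library"), the result would be sent with something like `await update.message.reply_text(text, parse_mode="HTML")`.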

A Few Details That Made It Work Better

After using it for a while, a few small touches turned out to matter more than I expected.

Multiple studies in one voice note. Patients often arrive with more than one request, like a thyroid US and a neck soft tissue scan in the same visit. Instead of forcing me to send two separate recordings, the bot handles a single voice note that mentions multiple studies and returns all of them stacked in one reply. This was the moment the tool started feeling natural rather than mechanical.

Package templates. Some studies are really bundles, like a lymph node screen that combines neck, axillary, and inguinal ultrasound into one report. I marked these with a “PAKET” suffix in my template library and the bot knows to expand them into their constituent sub-reports automatically.
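The two behaviors above, several studies in one note and package expansion, can be sketched outside the model as plain list handling. Everything here is hypothetical: the template names, the `PACKAGES` mapping, and the separator are made up for illustration, and the real bot may well leave this work to the language model.

```python
PACKAGE_SUFFIX = "PAKET"

# Hypothetical mapping from a package template to its constituent studies.
PACKAGES = {
    "Lenf Nodu Tarama PAKET": ["Boyun US", "Aksiller US", "İnguinal US"],
}


def expand_studies(requested, packages=PACKAGES):
    """Replace package names with their sub-studies, keeping order, dropping repeats."""
    expanded = []
    for name in requested:
        if name.endswith(PACKAGE_SUFFIX):
            parts = packages.get(name, [name])  # unknown packages pass through as-is
        else:
            parts = [name]
        for part in parts:
            if part not in expanded:
                expanded.append(part)
    return expanded


def stack_reports(reports, separator="\n\n" + "-" * 20 + "\n\n"):
    """Join several finished reports into one reply, in the order given."""
    return separator.join(report.strip() for report in reports)
```

A dictation that names a thyroid study and the lymph node package would then expand to four study names, each filled from its own template and stacked into a single message.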

A device line and a closing note. Every report ends with the device used and a standard liability disclaimer. Both are configurable from inside Telegram with simple commands, so if I move to a different machine or want to tag a study as suboptimal, I update them in five seconds without touching code.
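Settings like these can live in a small JSON file that a command handler rewrites. The sketch below assumes two hypothetical commands, `/cihaz` for the device line and `/not` for the closing note. The post does not name the real commands or say where the values are stored.

```python
import json
from pathlib import Path

DEFAULTS = {"device": "", "note": ""}
COMMANDS = {"/cihaz": "device", "/not": "note"}  # hypothetical command names


def load_settings(path):
    """Read the settings file, falling back to defaults for missing keys."""
    p = Path(path)
    if not p.exists():
        return dict(DEFAULTS)
    return {**DEFAULTS, **json.loads(p.read_text(encoding="utf-8"))}


def apply_command(message, path):
    """Handle '/cihaz <text>' or '/not <text>'; return new settings or None."""
    command, _, value = message.strip().partition(" ")
    key = COMMANDS.get(command)
    if key is None:
        return None  # not a settings command
    settings = load_settings(path)
    settings[key] = value.strip()
    Path(path).write_text(
        json.dumps(settings, ensure_ascii=False, indent=2), encoding="utf-8"
    )
    return settings
```

Because the file is re-read on every report, a change made from the phone takes effect on the very next dictation.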

A reload command. I add new templates to the spreadsheet whenever I notice I am writing a new study type repeatedly. A /yenile command tells the bot to re-read the file without restarting anything. This sounds trivial but it removed the last bit of friction that would have made me put off updating templates.
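A reload like this mostly comes down to keeping the templates behind one object that can re-read its file. The sketch below assumes the spreadsheet is exported as a CSV with `name` and `text` columns. The file format, column names, and class name are assumptions.

```python
import csv


class TemplateLibrary:
    """Holds the templates in memory and can refresh them from disk."""

    def __init__(self, path):
        self.path = path
        self.templates = {}
        self.reload()

    def reload(self):
        """Re-read the file, swap in the new templates, return how many loaded."""
        with open(self.path, newline="", encoding="utf-8") as f:
            fresh = {row["name"].strip(): row["text"] for row in csv.DictReader(f)}
        # Assign only after a successful read, so a broken file keeps the old set.
        self.templates = fresh
        return len(fresh)
```

The `/yenile` handler would then only need to call `library.reload()` and reply with the returned count.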

What About the Cost

Running Whisper locally is free. The language model calls cost a few cents per report, mostly because the prompt includes the entire template library. I use prompt caching, a feature where the API keeps the already-processed template library for a short time between calls, so a call that follows soon after an earlier one is billed at a much lower rate for that part of the prompt. On a busy clinic day the cache stays warm most of the time. In practice, my monthly bill is in the noise.
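In the Anthropic Messages API, caching is requested by marking the end of the reusable prefix with a `cache_control` field. The sketch below only builds the request arguments. The function name, the `max_tokens` value, and the model placeholder are assumptions, and the real bot's request may be shaped differently.

```python
def build_request(template_library_text, transcript, model="MODEL_NAME_HERE"):
    """Build keyword arguments for a Messages API call with a cacheable prefix."""
    return {
        "model": model,  # placeholder, replace with a real model id
        "max_tokens": 2000,  # assumed ceiling for one or two reports
        "system": [
            {
                "type": "text",
                "text": template_library_text,
                # Everything up to and including this block may be cached and reused.
                "cache_control": {"type": "ephemeral"},
            }
        ],
        # The dictation changes on every call, so it stays outside the cached prefix.
        "messages": [{"role": "user", "content": transcript}],
    }
```

With the official `anthropic` Python client, the call would be `client.messages.create(**build_request(library_text, transcript))`. The `usage` object on the response reports how many input tokens were written to or read from the cache, which is a simple way to confirm that caching is working. The cache only hits when the prefix is byte-for-byte identical, so the template text should be rendered in a stable order.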

You could go further and replace the language model with a small local one, such as one of the open-weight models that run on an Apple Silicon machine. The API cost would drop to zero and the data would stay entirely on your hardware. I considered this and decided against it for now, because tuning a local model to handle Turkish medical terminology and template selection well would have taken me a weekend by itself, and I wanted something working on Monday. If you have time and care about full local privacy, this is a reasonable next step.

How I Actually Built It

I will be honest: I did not type most of the code. I described what I wanted to Claude Code, an AI coding assistant, and we built it together over an evening. I told it the workflow I had in mind, made decisions about the stack (Python, Telegram bot library, Whisper, Claude API), and corrected the output as we went. The total amount of code is small, around three hundred lines spread across a handful of files.

This is worth saying out loud because the bar for building tools like this has dropped enormously. You do not need to be a software engineer. You need a clear idea of what you want, the patience to test it on yourself, and a willingness to iterate. The actual programming is increasingly the easy part.

Notes for Other Radiologists

A few honest observations from using it.

It is a productivity tool, not a medical device. I read every report before signing. The model occasionally picks the wrong template if I mumble the study name, and very rarely it phrases something in a way I would not. I treat it the way I would treat a fast but green typist: useful, mostly correct, never trusted blindly.

No patient data goes to the cloud unless you let it. In my workflow, I never dictate patient identifiers. Names and IDs live in the hospital system; the dictation is purely the radiology findings. The report comes back and I match it to the patient manually. This keeps the whole pipeline anonymous by construction.

The template library is the actual product. The bot is just plumbing. The reason the output reads like me is that the templates are mine, written from years of habit. If you build something similar and skip this step, the model will produce generic radiology prose that you will spend more time editing than you saved. Spend the afternoon writing your templates first. Everything else falls into place after.

Start with one modality. I only do ultrasound in this bot. CT and MRI would need their own templates and probably their own prompt tuning. Doing one thing well is enough.

The Point

This is not a polished product. It is one radiologist solving one specific annoyance in his own day, with tools that happen to be good enough now to make a weekend project useful in real clinical work. The interesting thing is not the bot itself. The interesting thing is that the cost of building exactly the small tool you need has collapsed to almost nothing, and a lot of the daily friction we put up with at work is now solvable by the people experiencing it.

If you are a radiologist who has been thinking “I wish there was a thing that just did X for me,” there probably can be. You just have to describe X clearly to a coding assistant and try it.